Snowflake snowpro advanced architect practice test

snowpro advanced architect

Question 1

A healthcare company wants to share data with a medical institute. The institute is running a Standard edition of Snowflake; the healthcare company is running a Business Critical edition.

How can this data be shared?

- A. The healthcare company will need to change the institutes Snowflake edition in the accounts panel.

- B. By default, sharing is supported from a Business Critical Snowflake edition to a Standard edition.

- C. Contact Snowflake and they will execute the share request for the healthcare company.

- D. Set the share_restriction parameter on the shared object to false.

Answer:

c

Question 2

A company has several sites in different regions from which the company wants to ingest data.

Which of the following will enable this type of data ingestion?

- A. The company must have a Snowflake account in each cloud region to be able to ingest data to that account.

- B. The company must replicate data between Snowflake accounts.

- C. The company should provision a reader account to each site and ingest the data through the reader accounts.

- D. The company should use a storage integration for the external stage.

Answer:

a

Question 3

Files arrive in an external stage every 10 seconds from a proprietary system. The files range in size from 500 K to 3 MB. The data must be accessible by dashboards as soon as it arrives.

How can a Snowflake Architect meet this requirement with the LEAST amount of coding? (Choose two.)

- A. Use Snowpipe with auto-ingest.

- B. Use a COPY command with a task.

- C. Use a materialized view on an external table.

- D. Use the COPY INTO command.

- E. Use a combination of a task and a stream.

Answer:

ae

Question 4

How can an Architect enable optimal clustering to enhance performance for different access paths on a given table?

- A. Create multiple clustering keys for a table.

- B. Create multiple materialized views with different cluster keys.

- C. Create super projections that will automatically create clustering.

- D. Create a clustering key that contains all columns used in the access paths.

Answer:

b

Question 5

Which security, governance, and data protection features require, at a MINIMUM, the Business Critical edition of Snowflake? (Choose two.)

- A. Extended Time Travel (up to 90 days)

- B. Customer-managed encryption keys through Tri-Secret Secure

- C. Periodic rekeying of encrypted data

- D. AWS, Azure, or Google Cloud private connectivity to Snowflake

- E. Federated authentication and SSO

Answer:

bd

Question 6

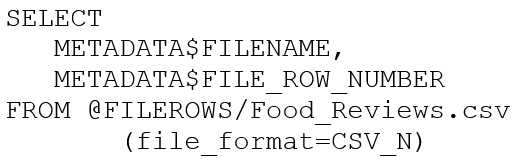

An Architect runs the following SQL query:

How can this query be interpreted?

- A. FILEROWS is a stage. FILE_ROW_NUMBER is line number in file.

- B. FILEROWS is the table. FILE_ROW_NUMBER is the line number in the table.

- C. FILEROWS is a file. FILE_ROW_NUMBER is the file format location.

- D. FILERONS is the file format location. FILE_ROW_NUMBER is a stage.

Answer:

a

Question 7

A companys client application supports multiple authentication methods, and is using Okta.

What is the best practice recommendation for the order of priority when applications authenticate to Snowflake?

- A. 1. OAuth (either Snowflake OAuth or External OAuth)2. External browser3. Okta native authentication4. Key Pair Authentication, mostly used for service account users5. Password

- B. 1. External browser, SSO2. Key Pair Authentication, mostly used for development environment users3. Okta native authentication4. OAuth (ether Snowflake OAuth or External OAuth)5. Password

- C. 1. Okta native authentication2. Key Pair Authentication, mostly used for production environment users3. Password4. OAuth (either Snowflake OAuth or External OAuth)5. External browser, SSO

- D. 1. Password2. Key Pair Authentication, mostly used for production environment users3. Okta native authentication4. OAuth (either Snowflake OAuth or External OAuth)5. External browser, SSO

Answer:

b

Question 8

A Snowflake Architect is designing a multi-tenant application strategy for an organization in the Snowflake Data Cloud and is considering using an Account Per Tenant strategy.

Which requirements will be addressed with this approach? (Choose two.)

- A. There needs to be fewer objects per tenant.

- B. Security and Role-Based Access Control (RBAC) policies must be simple to configure.

- C. Compute costs must be optimized.

- D. Tenant data shape may be unique per tenant.

- E. Storage costs must be optimized.

Answer:

ce

Question 9

An Architect would like to save quarter-end financial results for the previous six years.

Which Snowflake feature can the Architect use to accomplish this?

- A. Search optimization service

- B. Materialized view

- C. Time Travel

- D. Zero-copy cloning

- E. Secure views

Answer:

d

Question 10

What are some of the characteristics of result set caches? (Choose three.)

- A. Time Travel queries can be executed against the result set cache.

- B. Snowflake persists the data results for 24 hours.

- C. Each time persisted results for a query are used, a 24-hour retention period is reset.

- D. The data stored in the result cache will contribute to storage costs.

- E. The retention period can be reset for a maximum of 31 days.

- F. The result set cache is not shared between warehouses.

Answer:

bce